EdgeAI made simple – Exploring Image Classification with Arduino Portenta, Edge Impulse, and OpenMV

Introduction

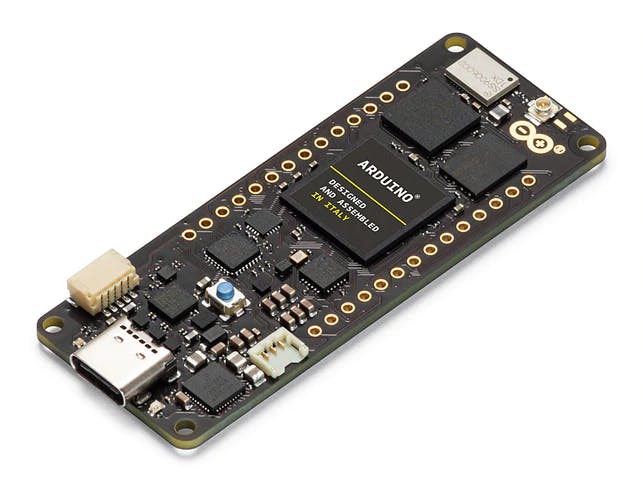

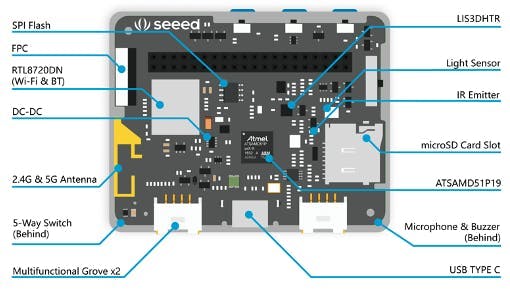

This tutorial explores the Arduino Portenta, a development board with two processor cores that can run tasks in parallel. The Portenta can efficiently run models created with TensorFlow™ Lite. For example, one core can run a computer vision model on the fly (inference) while the other handles low-level operations such as controlling a motor, communicating, or acting as a user interface.

The onboard wireless module can manage WiFi and Bluetooth® connectivity simultaneously.

Two Parallel Cores

The Portenta H7’s central processor is the dual-core STM32H747, which combines a Cortex® M7 running at 480 MHz and a Cortex® M4 running at 240 MHz. The two cores communicate via a Remote Procedure Call mechanism that lets code on one core call functions on the other. Both cores share all the on-chip peripherals and can run:

- Arduino sketches on top of the Arm® Mbed™ OS

- Native Mbed™ applications

- MicroPython / JavaScript via an interpreter

- TensorFlow™ Lite

Memory

Memory is crucial for embedded machine learning projects. The Portenta H7 board can host up to 64 MB of SDRAM and 128 MB of QSPI Flash. My board comes with 8 MB of SDRAM and 16 MB of QSPI Flash. However, it is essential to note that machine learning inference uses the MCU's internal SRAM, which for the STM32H747 is only 1 MB. The MCU also has 2 MB of internal Flash, used mainly for code storage.
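As a rough illustration of why these limits matter, the sketch below checks whether a model would plausibly fit in the internal memories quoted above. The function name `fits_on_mcu` and all the example sizes are hypothetical, chosen only for illustration, and 1 MB is assumed to mean 1024 x 1024 bytes. A real budget would be tighter, since the firmware, stack, and runtime also consume memory.

```python
# Internal memory figures quoted in the text for the STM32H747.
# Assumption: 1 MB is taken as 1024 * 1024 bytes.
MCU_SRAM_BYTES = 1 * 1024 * 1024   # 1 MB of SRAM, used during inference
MCU_FLASH_BYTES = 2 * 1024 * 1024  # 2 MB of Flash, mainly for code storage


def fits_on_mcu(model_bytes, working_ram_bytes, firmware_bytes=0):
    """Return True if a model plausibly fits the internal memories.

    model_bytes: size of the model stored in Flash (hypothetical input).
    working_ram_bytes: RAM needed at inference time (hypothetical input).
    firmware_bytes: Flash already taken by the application code.
    """
    flash_ok = model_bytes + firmware_bytes <= MCU_FLASH_BYTES
    ram_ok = working_ram_bytes <= MCU_SRAM_BYTES
    return flash_ok and ram_ok


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from a real model.
    print(fits_on_mcu(model_bytes=300_000, working_ram_bytes=200_000,
                      firmware_bytes=500_000))
    print(fits_on_mcu(model_bytes=3_000_000, working_ram_bytes=200_000))
```

The first call stays within both limits, while the second exceeds the 2 MB of internal Flash, so it would need a smaller model or a different storage location.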

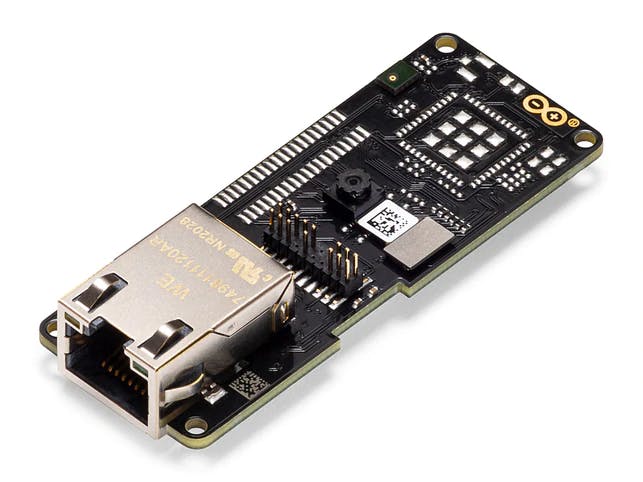

Vision Shield

We will add a Vision Shield to our Portenta board for vision applications. The shield brings industry-rated features such as Ethernet (or LoRa), a camera, and microphones.

- Camera: Ultra-low-power Himax HM-01B0 monochrome camera module with 320 x 320 active pixel resolution and support for QVGA.

- Microphone: 2 x MP34DT05, an ultra-compact, low-power, omnidirectional, digital MEMS microphone built with a capacitive sensing element and an IC interface.
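To put the camera resolutions in context, the sketch below estimates how much memory a single frame takes. It assumes one byte (8 bits) per pixel for the monochrome sensor, which is an assumption rather than a figure from the text, and it uses the standard QVGA size of 320 x 240. The helper name `frame_bytes` is hypothetical.

```python
def frame_bytes(width, height, bytes_per_pixel=1):
    """Size in bytes of one uncompressed frame.

    Assumption: a monochrome frame stores 1 byte per pixel.
    """
    return width * height * bytes_per_pixel


# 1 MB of MCU SRAM, taken as 1024 * 1024 bytes.
MCU_SRAM_BYTES = 1024 * 1024

full_frame = frame_bytes(320, 320)  # full active pixel array: 102400 bytes
qvga_frame = frame_bytes(320, 240)  # QVGA: 76800 bytes

if __name__ == "__main__":
    for name, size in (("320x320", full_frame), ("QVGA", qvga_frame)):
        share = size / MCU_SRAM_BYTES
        print(f"{name}: {size} bytes ({share:.1%} of 1 MB SRAM)")
```

Under these assumptions, a single frame is a small but not negligible share of the 1 MB of SRAM, which is one reason image classification models on this class of device usually work on reduced input sizes.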

In the video, you will get an idea of how the final project will look: